The Glass Box: A Transparency Framework for AI-Driven Monetization

Co-authored with Nick Thor

The integration of Artificial Intelligence (and Generative AI) into the advertising technology ecosystem promises a future of unprecedented efficiency, optimization, and revenue growth. From automating media plans to dynamically allocating inventory, AI is poised to redefine the pitch-to-pay lifecycle. However, this power comes with a critical challenge: the “Black Box” problem. As AI models become more complex and intelligent, their decision-making processes can become opaque, creating significant risks for media companies, including hidden algorithmic biases, regulatory compliance issues, and a fundamental lack of trust from users and clients alike. Without transparency, the promise of AI is undermined by uncertainty.

Operative addresses this challenge with Adeline, an assistive AI platform built on a foundation of transparency and security. This article introduces “The Glass Box,” a comprehensive framework for building and deploying trustworthy AI in media monetization, and shows how Adeline embodies it. The framework is not an abstract concept; it is deeply embedded in Adeline’s architecture and functionality.

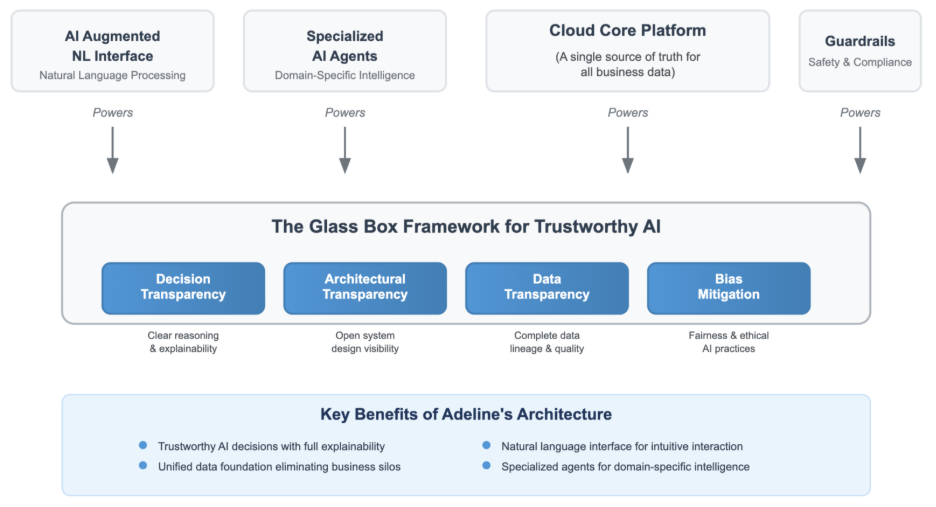

Adeline is designed around the value of human expertise: it aims to equip people with better tools so they can focus on more strategic objectives. It is built on four essential pillars designed to provide clarity, accountability, and control at every stage of the AI-driven workflow:

- Architectural Transparency (The Blueprint)

Adeline’s modular, agent-based design deconstructs complex processes into understandable, auditable components. Specialized AI agents, orchestrated by an Agentic Workflow, provide clear functional boundaries, making the system inherently more interpretable than monolithic AI models.

- Data Transparency (The Foundation)

Powered by CloudCore, Adeline operates from a unified, governed, and auditable data foundation. By creating a single source of truth across platforms like AOS, Operative One, OnAir, and IBMS, this pillar ensures data integrity and provides a clear lineage for the information fueling AI decisions.

- Decision Transparency (The Explanation)

Adeline is designed for explainability. Through its AI Augmented Natural Language Interface, users can interrogate the system, asking why it made a specific recommendation. This, combined with a “human-in-the-loop” philosophy, ensures that users can understand, validate, and override AI-generated outputs.

- Bias Mitigation (The Guardrails)

The Glass Box framework includes proactive measures to identify and correct algorithmic bias across Linear TV, CTV, and Digital inventory. By leveraging a holistic dataset and enabling continuous human oversight, Adeline provides the tools to ensure fairer and more equitable media monetization.

This article will detail each pillar of The Glass Box framework, demonstrating how Adeline’s design transforms the black box of AI into a transparent, trusted, and indispensable asset for the modern media enterprise.

Introduction: The Black Box Dilemma in AdTech

The media industry is at a pivotal moment. The convergence of Linear TV, CTV, and Digital advertising has created immense operational complexity, which AI is uniquely positioned to solve. AI promises to automate the creation of “infinitely-optimized” media plans, forecast audience behaviour with greater precision, and maximize the value of every single ad impression.

However, as media companies integrate these powerful tools, they are confronting a critical barrier to adoption: the “black box.” When an AI system recommends a specific media mix or pricing strategy, stakeholders are often left with more questions than answers. How did it arrive at this conclusion? What data did it use? Could it be perpetuating historical biases?

This opacity is not merely a technical issue; it is a significant business risk.

- Algorithmic Bias: An AI model trained on historical data might inadvertently learn and amplify societal biases, leading to discriminatory ad delivery that excludes certain demographics from seeing housing, employment, or credit opportunities, exposing companies to legal and reputational damage.

- Lack of User Trust: If sales and operations teams do not understand or trust the recommendations of an AI tool, adoption will falter. They will revert to manual processes, negating the technology’s value and hindering efficiency gains.

- Regulatory Scrutiny: As regulations like GDPR evolve, the demand for algorithmic accountability is increasing. Companies must be able to explain and justify the decisions made by their automated systems to regulators and consumers.

To unlock the true potential of AI, media companies need more than just powerful algorithms; they need a framework for trust. They need a "Glass Box."

The following diagram illustrates the four core pillars of Operative’s Glass Box framework, which are powered by Adeline’s underlying technology to deliver a trustworthy AI solution.

Pillar 1: Architectural Transparency (The Blueprint)

The foundation of a trustworthy AI system lies in its design. A monolithic “black box” AI, where a single, massive model handles every task, is inherently difficult to debug, audit, and understand. Adeline’s agentic architecture provides a transparent alternative by breaking down complex business processes into a collaborative network of specialized, understandable AI agents.

The Role of Specialized Agents and Agentic Workflow

Instead of one AI trying to do everything, Adeline employs a team of distinct agents, each an expert in a specific task within the complex media workflow. For example, when processing an RFP, the Media Planning Agent doesn’t work alone; it orchestrates a series of sub-specialists:

- A Product Selection Agent intelligently analyses the RFP to identify the most appropriate products from the media company’s catalogue, even when the advertiser’s goals are implied rather than explicitly stated.

- A Planner Preference Agent comprehends and applies the unique guidelines, historical preferences, and strategic nuances of human media planners, ensuring the AI’s output is consistent with their expert approach.

- A Targeting Agent tackles the difficult and critical task of identifying and assigning the optimal audience targets and options to each line item in a digital media plan.

- A Budget Allocation Agent analyses the advertiser’s needs and historical data to intelligently distribute the budget across various plan lines and verticals, creating a truly converged and optimized proposal.

Other specialized agents, like the Content Rights Agent or the Campaign Performance Agent, handle their respective domains with similar focus and expertise.
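The Budget Allocation Agent’s core job, distributing a budget across plan lines using historical data, can be illustrated with a minimal Python sketch. It assumes each plan line carries a single hypothetical `historical_weight` score and splits the budget in proportion to it; `PlanLine` and `allocate_budget` are illustrative names, not Adeline’s API, and the agent’s actual logic is not described in this article.

```python
from dataclasses import dataclass


@dataclass
class PlanLine:
    name: str
    # Hypothetical score derived from historical data (e.g. past delivery or revenue).
    historical_weight: float


def allocate_budget(total: int, lines: list[PlanLine]) -> dict[str, int]:
    """Split an integer budget across plan lines in proportion to their weights.

    Largest-remainder rounding makes the parts sum exactly to `total`.
    """
    weight_sum = sum(line.historical_weight for line in lines)
    if weight_sum <= 0 or any(line.historical_weight < 0 for line in lines):
        raise ValueError("weights must be non-negative with a positive sum")
    exact = {line.name: total * line.historical_weight / weight_sum for line in lines}
    alloc = {name: int(share) for name, share in exact.items()}
    leftover = total - sum(alloc.values())
    # Give the remaining units to the lines with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda name: exact[name] - alloc[name], reverse=True)
    for name in by_remainder[:leftover]:
        alloc[name] += 1
    return alloc


plan = [
    PlanLine("Linear prime time", 5.0),
    PlanLine("CTV 18-34", 3.0),
    PlanLine("Digital display", 2.0),
]
print(allocate_budget(100_000, plan))
# {'Linear prime time': 50000, 'CTV 18-34': 30000, 'Digital display': 20000}
```

Largest-remainder rounding is used so that whole-currency allocations always add up exactly to the requested budget, which keeps the output easy to audit against the RFP.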

Each agent has a clearly defined “action space” and purpose, making its function transparent and its performance individually measurable. These specialized agents are coordinated by the Agentic Workflow, an orchestration engine that sequences tasks and delegates them to the appropriate agent.
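The idea of a declared “action space” plus an orchestrator that delegates can be sketched in a few lines of Python. Everything here is hypothetical: `Agent`, `Orchestrator`, the action names, and the lambda handlers stand in for real agents, and this is not the Agentic Workflow’s actual interface. The point is that every step maps to exactly one named agent, and an agent refuses work outside its declared scope.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    """A specialist with an explicitly declared action space (hypothetical interface)."""
    name: str
    action_space: frozenset[str]
    handler: Callable[[str, dict], dict]

    def run(self, action: str, context: dict) -> dict:
        # Refuse anything outside the declared action space.
        if action not in self.action_space:
            raise PermissionError(f"{self.name} may not perform '{action}'")
        return self.handler(action, context)


class Orchestrator:
    """Sequences tasks and delegates each one to the agent that declares it."""

    def __init__(self, agents: list[Agent]):
        self.agents = agents

    def agent_for(self, action: str) -> Agent:
        for agent in self.agents:
            if action in agent.action_space:
                return agent
        raise LookupError(f"no agent declares action '{action}'")

    def execute(self, steps: list[str], context: dict) -> dict:
        for action in steps:
            # Each agent's output is merged into the shared context for later steps.
            context = {**context, **self.agent_for(action).run(action, context)}
        return context


orchestrator = Orchestrator([
    Agent("Product Selection Agent", frozenset({"select_products"}),
          lambda action, ctx: {"products": ["CTV Premium", "Linear Prime"]}),
    Agent("Targeting Agent", frozenset({"assign_targets"}),
          lambda action, ctx: {"targets": {p: "A18-34" for p in ctx["products"]}}),
])
result = orchestrator.execute(["select_products", "assign_targets"], {"rfp_id": "RFP-001"})
print(result["targets"])  # {'CTV Premium': 'A18-34', 'Linear Prime': 'A18-34'}
```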

This modular design is the first layer of the Glass Box. When a user asks Adeline to create a proposal, they are not interacting with an unknowable oracle. They are initiating a transparent, traceable workflow: the orchestrator invokes the Media Planning Agent, which uses tools to access data, consults its memory for context, and generates a result. If a problem arises, it’s possible to isolate which agent or step in the workflow is responsible, making the system far easier to debug and audit. This architectural clarity builds confidence and provides a clear blueprint of the system’s operations.
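The traceability claim can be made concrete with a small sketch of a traced workflow run. It assumes each step is a named agent function that reads and extends a shared context; `run_traced`, `TraceEntry`, and `failing_step` are hypothetical helpers, not part of Adeline. The example deliberately gives the Budget Allocation step an incomplete context to show how the trace isolates the responsible agent.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class TraceEntry:
    step: int
    agent: str
    inputs: dict
    output: Optional[dict] = None
    error: Optional[str] = None


def run_traced(steps: list[tuple[str, Callable[[dict], dict]]],
               context: dict) -> tuple[dict, list[TraceEntry]]:
    """Run (agent_name, fn) steps in order, recording one trace entry per step.

    Execution stops at the first failure, so the last entry names the responsible agent.
    """
    trace: list[TraceEntry] = []
    for number, (agent_name, fn) in enumerate(steps, start=1):
        entry = TraceEntry(step=number, agent=agent_name, inputs=dict(context))
        trace.append(entry)
        try:
            entry.output = fn(context)
        except Exception as exc:  # record the failure instead of losing it
            entry.error = f"{type(exc).__name__}: {exc}"
            break
        context = {**context, **entry.output}
    return context, trace


def failing_step(trace: list[TraceEntry]) -> Optional[TraceEntry]:
    """Return the first entry that recorded an error, if any."""
    return next((entry for entry in trace if entry.error is not None), None)


steps = [
    ("Product Selection Agent", lambda ctx: {"products": ["CTV Premium"]}),
    # Fails on purpose: this step expects a 'total' budget the context never supplied.
    ("Budget Allocation Agent", lambda ctx: {"budget": ctx["total"] // len(ctx["products"])}),
]
_, trace = run_traced(steps, {"rfp_id": "RFP-001"})
culprit = failing_step(trace)
print(culprit.step, culprit.agent, culprit.error)
# 2 Budget Allocation Agent KeyError: 'total'
```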

Pillar 2: Data Transparency (The Foundation)

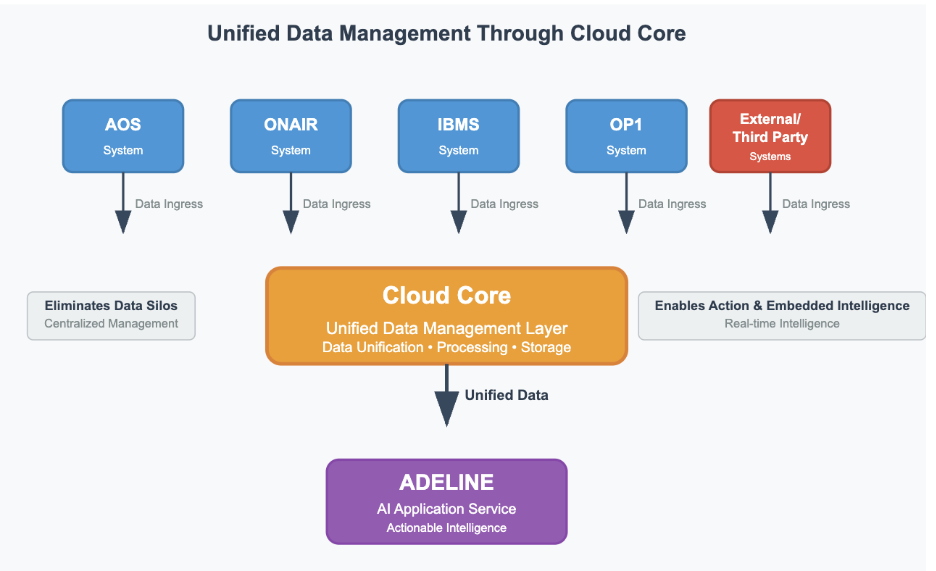

An AI is only as good as the data it’s trained on. Biased, siloed, or poor-quality data will inevitably lead to flawed and untrustworthy outputs. The second pillar of the Glass Box framework is a commitment to a transparent, unified, and well-governed data foundation. Adeline achieves this through CloudCore.

CloudCore: The Single Source of Truth

CloudCore is the central data layer for Adeline, designed to aggregate, store, and process data from across the Operative ecosystem, including AOS, OnAir, Operative One and IBMS, as well as external systems. It serves as the “single source of truth,” eliminating the data chaos that plagues converged media operations.

Key features of CloudCore that support data transparency include:

- Data Collection and Aggregation: CloudCore seamlessly ingests data from disparate sources, breaking down silos between Linear, Digital, and CTV operations. This provides a holistic view of inventory, performance, and revenue.

- Data Management and Governance: The platform provides robust capabilities to ensure data quality, integrity, and compliance. Policies can be defined and data lineage can be tracked, creating an auditable trail of how data is sourced and transformed before it is used by Adeline’s AI agents.

- High-Performance Data Access: CloudCore provides a low-latency access layer, ensuring that when Adeline makes a decision, it is based on the most current and accurate business data available.
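Lineage tracking of the kind described for CloudCore can be sketched as records that carry their own transformation history. This is a minimal illustration under loudly stated assumptions: the `FIELD_MAPS` entries for AOS and OnAir use invented field names (the real schemas are not described here), and `UnifiedRecord`, `ingest`, and `normalize_channel` are hypothetical, not CloudCore APIs.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageStep:
    operation: str
    detail: str
    at: str  # UTC timestamp of the transformation


@dataclass
class UnifiedRecord:
    source_system: str
    order_id: str
    channel: str
    revenue: float
    lineage: list[LineageStep] = field(default_factory=list)

    def log(self, operation: str, detail: str) -> None:
        self.lineage.append(
            LineageStep(operation, detail, datetime.now(timezone.utc).isoformat()))


# HYPOTHETICAL field mappings: the real AOS / OnAir schemas are not described in this article.
FIELD_MAPS = {
    "AOS": {"order_id": "orderId", "channel": "mediaType", "revenue": "netRevenue"},
    "OnAir": {"order_id": "contract_no", "channel": "platform", "revenue": "amount"},
}
CHANNEL_ALIASES = {"linear tv": "Linear", "ctv": "CTV", "display": "Digital", "digital": "Digital"}


def ingest(source: str, raw: dict) -> UnifiedRecord:
    """Map a raw source row onto the unified schema and log where it came from."""
    mapping = FIELD_MAPS[source]
    record = UnifiedRecord(
        source_system=source,
        order_id=str(raw[mapping["order_id"]]),
        channel=raw[mapping["channel"]],
        revenue=float(raw[mapping["revenue"]]),
    )
    record.log("ingest", f"mapped {source} fields {sorted(mapping.values())}")
    return record


def normalize_channel(record: UnifiedRecord) -> UnifiedRecord:
    """Harmonize channel labels across systems, logging the before/after values."""
    before = record.channel
    record.channel = CHANNEL_ALIASES.get(before.lower(), before)
    record.log("normalize_channel", f"{before!r} -> {record.channel!r}")
    return record


row = {"contract_no": 4711, "platform": "Linear TV", "amount": "1250.00"}
record = normalize_channel(ingest("OnAir", row))
for step in record.lineage:
    print(step.operation, step.detail)
# ingest mapped OnAir fields ['amount', 'contract_no', 'platform']
# normalize_channel 'Linear TV' -> 'Linear'
```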

This governed, unified data layer is critical for building trust. It ensures that Adeline’s insights are not based on a fragmented or skewed view of the business. When Adeline recommends a media plan that balances Linear and Digital inventory, that recommendation rests on a complete, auditable dataset that reflects the full scope of a media company’s assets, a foundational requirement for fair and effective monetization.

Pillar 3: Decision Transparency (The Explanation)

Even with a transparent architecture and data foundation, the core question remains: why did the AI make a particular decision? The third pillar of the Glass Box framework is Explainable AI (XAI), which goes beyond providing an answer to explaining the reasoning behind it. Adeline is engineered to be not just an assistant but an understandable colleague.

Conversational AI as an Interface for Explainability

Adeline’s AI Augmented Natural Language Interface is the primary tool for achieving decision transparency. Its “Query Mode” and “Chat Mode” functionalities allow users to engage in a dialogue with their data and with the AI itself. This transforms the user experience from passively receiving recommendations to actively interrogating them.

For example, after the Ad Sales Copilot generates a media plan, a user can ask follow-up questions to understand its logic:

- User: “Why did you prioritize CTV inventory over Linear for the 18-34 demographic?”

- Adeline: “Based on historical campaign data from CloudCore for this advertiser, the 18-34 demographic has shown a 35% higher engagement rate on CTV platforms. The selected inventory also provides the requested reach while staying within the specified CPM constraints of the RFP.”

This capability to provide the “why” behind the “what” is crucial. It allows human experts to validate the AI’s reasoning, build confidence in its outputs, and identify potential flaws in its logic.
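One way to make the “why” answerable is for each recommendation to carry its supporting evidence and constraint checks alongside the decision itself. The sketch below assumes such a structure; `Recommendation`, `Evidence`, and `explain` are hypothetical names, and the 35% figure is simply the illustrative number from the dialogue above, not real data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    claim: str
    source: str  # where the supporting figure came from


@dataclass
class Recommendation:
    decision: str
    evidence: list[Evidence]
    constraints: dict[str, bool]  # constraint name -> satisfied?

    def explain(self) -> str:
        """Render the reasoning behind the decision as reviewable text."""
        lines = [f"Recommendation: {self.decision}", "Because:"]
        lines += [f"  - {e.claim} (source: {e.source})" for e in self.evidence]
        lines += [f"  - Constraint '{name}': {'satisfied' if ok else 'VIOLATED'}"
                  for name, ok in self.constraints.items()]
        return "\n".join(lines)


rec = Recommendation(
    decision="Prioritize CTV over Linear for 18-34",
    evidence=[Evidence("18-34 engagement on CTV is 35% higher than on Linear",
                       "CloudCore historical campaign data for this advertiser")],
    constraints={"requested reach": True, "RFP CPM cap": True},
)
print(rec.explain())
```

Keeping evidence and constraint checks as structured fields, rather than free text generated after the fact, means a reviewer can verify each cited source and spot a violated constraint at a glance.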

Human-in-the-Loop (HITL) by Design

Adeline’s agentic architecture is designed for a phased evolution, from AI Assisted to Supervised Autonomy and ultimately Strategic Autonomy. Central to this evolution is the principle of Human-in-the-Loop (HITL). In the initial phases, the AI agent presents recommendations, but a human media planner retains full control and provides explicit approval before any action is taken in a system like AOS.

This is more than a safety measure; it is a core component of the trust framework. The HITL process ensures that human expertise and strategic context are always part of the decision, making the final outcome a collaboration between human and machine. This builds institutional knowledge and trust, paving the way for greater autonomy in the future.
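The AI Assisted phase, in which nothing reaches a system like AOS without explicit human approval, can be expressed as a simple approval gate. This sketch is hypothetical: `ApprovalGate`, `ProposedAction`, and the `execute` callback (standing in for whatever would actually write to the downstream system) are illustrative, and it models only the initial phase, not Supervised or Strategic Autonomy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    EXECUTED = "executed"


@dataclass
class ProposedAction:
    description: str
    payload: dict
    status: Status = Status.PENDING
    reviewer: Optional[str] = None
    note: str = ""


class ApprovalGate:
    """Holds AI-proposed actions until a named human approves them."""

    def __init__(self, execute: Callable[[dict], None]):
        self._execute = execute  # hypothetical hook that would write to the downstream system
        self.queue: list[ProposedAction] = []

    def propose(self, description: str, payload: dict) -> ProposedAction:
        action = ProposedAction(description, payload)
        self.queue.append(action)
        return action

    def review(self, action: ProposedAction, reviewer: str, approve: bool,
               note: str = "", edited_payload: Optional[dict] = None) -> None:
        if action.status is not Status.PENDING:
            raise ValueError("action has already been reviewed")
        action.reviewer, action.note = reviewer, note
        if edited_payload is not None:
            action.payload = edited_payload  # the human override replaces the AI's version
        action.status = Status.APPROVED if approve else Status.REJECTED

    def commit(self, action: ProposedAction) -> None:
        if action.status is not Status.APPROVED:
            raise PermissionError("only human-approved actions may be executed")
        self._execute(action.payload)
        action.status = Status.EXECUTED


written = []
gate = ApprovalGate(execute=written.append)
plan = gate.propose("Book CTV line for RFP-001", {"line": "CTV 18-34", "budget": 30000})
try:
    gate.commit(plan)
except PermissionError as exc:
    print(exc)  # only human-approved actions may be executed
gate.review(plan, reviewer="media.planner", approve=True,
            edited_payload={"line": "CTV 18-34", "budget": 25000})
gate.commit(plan)
print(written)  # [{'line': 'CTV 18-34', 'budget': 25000}]
```

Recording the reviewer, note, and any edited payload on each action also yields the feedback trail that later phases of autonomy would depend on.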

Pillar 4: Bias Mitigation (The Guardrails)

Perhaps the most significant risk of “black box” AI in advertising is its potential to perpetuate and even amplify societal biases. An algorithm optimizing for clicks or conversions might learn from historical data that certain job ads are clicked on more by men, or that high-value product ads perform better in affluent zip codes. This can lead to discriminatory ad delivery that limits opportunities for marginalized groups. The Glass Box framework provides tangible guardrails to actively monitor and mitigate these risks.

A Framework for Fairer Monetization

Adeline’s architecture provides several mechanisms to combat algorithmic bias:

- Holistic Data Reduces Skew: By drawing from the CloudCore unified data platform, Adeline’s models are less susceptible to the biases inherent in fragmented datasets. A comprehensive view across all audiences and platforms provides a more balanced foundation for decision-making.

- Explainability Enables Auditing: The AI Augmented Natural Language Interface becomes a powerful tool for auditing. A revenue operations manager can actively probe for bias by asking questions like, “Show me the audience delivery breakdown by estimated gender and ethnicity for the ‘Financial Services’ campaign last quarter. How does this compare to the eligible population?” This allows for the proactive identification of delivery skews that need correction.

- Human Oversight Corrects Biases: The HITL workflow is the ultimate guardrail. A human planner, guided by principles of fairness and inclusion, must review every AI-generated plan, assess whether it is overly concentrated on one demographic, and adjust it where needed to ensure a more equitable distribution. This feedback helps refine the agent’s future performance. As the agent improves through sustained oversight and use, users can see its recommendations become more reliable and gain the confidence to gradually offload routine activities while retaining oversight of critical decisions.

- Agentic Modularity Allows for Fairness-Aware Components: Adeline’s modular design makes it possible to integrate specialized “fairness-aware” algorithms or agents into the workflow. For instance, a Variance Reduction System—similar to what has been deployed for housing ads on other platforms—could be implemented as a dedicated agent that measures and adjusts ad delivery to ensure more equitable reach across demographic groups.
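The audit question above, comparing delivery by group against the eligible population, reduces to a ratio of shares, and a dedicated fairness-aware agent could act on that ratio. The sketch below is a minimal, hypothetical illustration: group labels and counts are placeholders, the 0.2 tolerance and the capped `1 / ratio` pacing multipliers are assumptions, and this is not the Variance Reduction System or any production method.

```python
def delivery_skew(delivered: dict[str, int], eligible: dict[str, int]) -> dict[str, float]:
    """Each group's share of delivered impressions divided by its share of the eligible population.

    1.0 means parity; values below 1.0 mean the group is under-served.
    """
    total_delivered = sum(delivered.values())
    total_eligible = sum(eligible.values())
    if total_delivered == 0 or any(count <= 0 for count in eligible.values()):
        raise ValueError("need some delivery and a positive eligible count for every group")
    return {
        group: (delivered.get(group, 0) / total_delivered) / (count / total_eligible)
        for group, count in eligible.items()
    }


def flag_skew(ratios: dict[str, float], tolerance: float = 0.2) -> list[str]:
    """Groups whose ratio is more than `tolerance` away from parity (threshold is an assumption)."""
    return sorted(group for group, ratio in ratios.items() if abs(ratio - 1.0) > tolerance)


def pacing_multipliers(ratios: dict[str, float], cap: float = 3.0) -> dict[str, float]:
    """Naive corrective weights (1 / ratio, capped) that nudge future delivery toward parity."""
    return {group: min(cap, 1.0 / ratio) if ratio > 0 else cap for group, ratio in ratios.items()}


# Placeholder group labels and counts, not real campaign data.
eligible = {"group_a": 500_000, "group_b": 500_000}
delivered = {"group_a": 7_000, "group_b": 3_000}
ratios = delivery_skew(delivered, eligible)
print({g: round(r, 2) for g, r in ratios.items()})  # {'group_a': 1.4, 'group_b': 0.6}
print(flag_skew(ratios))                             # ['group_a', 'group_b']
print({g: round(m, 3) for g, m in pacing_multipliers(ratios).items()})
# {'group_a': 0.714, 'group_b': 1.667}
```

A ratio of 1.0 means a group receives impressions in proportion to its share of the eligible audience. The multipliers are deliberately naive and, in keeping with the HITL workflow, would still need human review before being applied.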

By providing these tools for visibility and control, Adeline empowers media companies to move beyond simply hoping their AI is fair, and instead actively manage it to achieve more equitable and responsible monetization.

Conclusion: Building the Future of Media on a Foundation of Trust

Artificial Intelligence is not a futuristic concept; it is a present-day reality that is reshaping the media landscape. For media companies to harness its full potential, they must move beyond a fascination with the technology itself and focus on building systems that are not only intelligent but also transparent, accountable, and trustworthy.

Operative’s Adeline platform, built on the principles of the Glass Box framework, provides the path forward. By embracing Architectural Transparency, Data Transparency, Decision Transparency, and proactive Bias Mitigation, Adeline demystifies AI. It transforms a potential “black box” liability into a trusted, collaborative partner that empowers sales, operations, and leadership teams to make smarter, faster, and fairer decisions. While AI will power the future of media monetization, success will depend on platforms like Adeline that are designed with demonstrable reliability and operational visibility as core principles.